Introduction

Many people think that using AI from languages other than Python is too difficult. Recently, however, a large number of pre-trained models have been published, making it easy to run AI inference from a variety of platforms and programming languages. Here, I will use ONNX Runtime from Ruby to try out GPT-2, a model that generates English text. Inference is easy with ONNX Runtime.

Preparation and installation

Install the following three gems.

- onnxruntime - Ruby bindings for ONNX Runtime.

- tokenizers - Ruby bindings for Tokenizers provided by Hugging Face.

- numo-narray - Ruby matrix library. Equivalent to NumPy.

gem install onnxruntime

gem install tokenizers

gem install numo-narray

The onnxruntime gem already contains binary files for each platform, so you don't need to install ONNX Runtime yourself. Just install the gem and it should work.

The tokenizers gem is written in Rust, so installation requires Rust. If Rust isn't available, blingfire can be used instead.

Also note that the singular "tokenizer" is a different gem.

Get the ONNX model

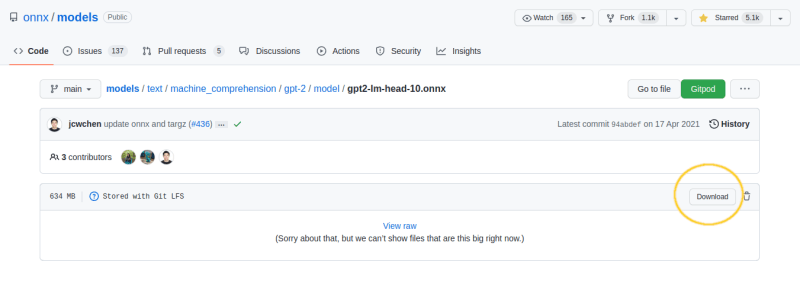

Here we use the GPT-2 model officially distributed by the ONNX project. Download GPT-2-LM-HEAD from the link.

If you try to download it by right-clicking the link on the screen above, it will fail because the link points to an HTML page. Click the link first, then download the model with the download button at the top right of the next page.

Put gpt2-lm-head-10.onnx in the same directory as the script. You can browse the contents of ONNX models using Netron.
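A relative path such as "gpt2-lm-head-10.onnx" is resolved against the directory you run the command from, so it can help to build the path from the script's own location and fail early if the file is missing. Here is a minimal sketch using only Ruby's standard library. Note that `model_path` is a hypothetical helper name of my own, not something provided by the onnxruntime gem.

```ruby
# Hypothetical helper (an assumption, not part of any gem): build an absolute
# path to the model file inside `dir` and raise a readable error if the file
# is not there.
def model_path(dir, filename = "gpt2-lm-head-10.onnx")
  path = File.expand_path(filename, dir)
  raise ArgumentError, "model file not found: #{path}" unless File.file?(path)

  path
end
```

In a script you could then pass `model_path(__dir__)` to `OnnxRuntime::Model.new` instead of the bare file name.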

Write Code

Are you ready? Now let's write some Ruby code.

require "tokenizers"

require "onnxruntime"

require "numo/narray"

s = "Why do cats want to ride on the keyboard?"

# Prepare a tokenizer.

tokenizer = Tokenizers.from_pretrained("gpt2")

# Load the model.

model = OnnxRuntime::Model.new("gpt2-lm-head-10.onnx")

# Convert the string to an array of token IDs.

ids = tokenizer.encode(s).ids

# Generate 10 tokens, always picking the most probable next one.

10.times do

  o = model.predict({ input1: [[ids]] })

  o = Numo::DFloat.cast(o["output1"][0])

  ids << o[true, -1, true].argmax

end

# Decode the token IDs back into a string and print it.

puts tokenizer.decode(ids)

The following two lines will be unfamiliar to many Ruby users. They use numo-narray, a library similar to NumPy. If you want to know more about what you can do with numo-narray, see the numo-narray documentation.

o = Numo::DFloat.cast(o["output1"][0])

ids << o[true, -1, true].argmax

To be honest, I don't fully understand what these matrix operations do. I simply translated the NumPy code to numo-narray. On the other hand, you don't have to be familiar with AI or mathematics to use publicly available AI models. It's like being able to drive a car without being able to repair it yourself.
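For readers who are curious, the two numo-narray lines can be mimicked with plain Ruby arrays. This is only a sketch under my own assumptions: the numbers are made up, the nested array stands in for a model output of shape [1, sequence length, vocabulary size], and `greedy_next_id` is a hypothetical name. The idea is to keep only the scores for the last position and take the index of the largest one.

```ruby
# Toy stand-in for the model output after o["output1"][0].
# Assumed shape: [1, sequence_length, vocab_size], here 1 x 3 x 4.
TOY_LOGITS = [
  [
    [0.1, 0.3, 0.2, 0.0], # scores for the token following position 1
    [0.5, 0.1, 0.9, 0.2], # scores for the token following position 2
    [0.2, 2.5, 0.4, 1.1]  # scores for the token following the last position
  ]
].freeze

# Hypothetical helper: plain-Ruby version of o[true, -1, true].argmax.
def greedy_next_id(logits)
  # o[true, -1, true]: keep every batch entry, only the last position,
  # and every vocabulary score.
  last_rows = logits.map(&:last)

  # argmax: index of the largest score. With a single batch entry the
  # flattened index is the same as the vocabulary index.
  scores = last_rows.flatten
  scores.each_with_index.max_by { |score, _index| score }.last
end

puts greedy_next_id(TOY_LOGITS) # prints 1, because 2.5 is the largest score
```

In the real script that index is the token ID appended to `ids`, which is why the loop extends the text by one token per iteration.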

Run

ruby gpt2.rb

Why do cats want to ride on the keyboard?

The answer is that they do.

This time, we did not call a web API; we actually ran a deep learning model on your own machine. I hope you found calling deep learning models from Ruby easier than you expected.

Enjoy your Ruby computing. Have a nice day!

Select words probabilistically

The implementation above always selects the most probable word. If you want to choose the next word at random according to its probability instead, you can do something like this.

def softmax(y)

  Numo::NMath.exp(y) / Numo::NMath.exp(y).sum(1, keepdims: true)

end

outputs = model.predict({ input1: [[ids]] })

logits = Numo::DFloat.cast(outputs['output1'][0])

logits = logits[true, -1, true]

probs = softmax(logits)

cum_probs = probs.cumsum(1)

r = rand(0..cum_probs[-1]) # 0..1

# Index of the sampled token: the number of cumulative probabilities below r.

(cum_probs < r).count
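The same idea can be written with plain Ruby arrays, which may make the steps easier to follow. This is a sketch rather than a drop-in replacement: it works on a flat array of scores instead of a Numo array, `sample_index` is a hypothetical name, and subtracting the maximum score before `Math.exp` is a common stability trick that I added, not something the snippet above does.

```ruby
# Softmax over a plain Ruby array of scores.
# Subtracting the maximum first avoids overflow in Math.exp (an addition of
# mine; the result is mathematically the same).
def softmax(scores)
  max = scores.max
  exps = scores.map { |s| Math.exp(s - max) }
  total = exps.sum
  exps.map { |e| e / total }
end

# Hypothetical helper: draw r in [0, 1) and return the first index whose
# cumulative probability exceeds r (inverse-CDF sampling).
def sample_index(probs, rng = Random.new)
  r = rng.rand
  cumulative = 0.0
  probs.each_with_index do |prob, index|
    cumulative += prob
    return index if r < cumulative
  end
  probs.size - 1 # guard against floating-point rounding
end

probs = softmax([2.0, 1.0, 0.1])
rng = Random.new(42) # fixed seed so repeated runs behave the same
counts = Hash.new(0)
10_000.times { counts[sample_index(probs, rng)] += 1 }

p probs
p counts
```

Indexes with higher scores are drawn more often, but lower-scoring ones still appear now and then, which is what makes the generated text vary between runs.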

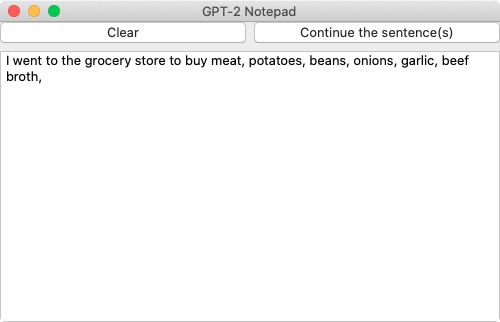

How to Add a GUI

Glimmer DSL for LibUI 0.5.23 ships with a new GPT2 Notepad sample (based on work by @2xijok). It is an AI text predictor that can predict the continuation of any text typed in. Learn more at: github.com/AndyObtiva/gli…
